The rise of Natural User Interfaces (NUIs) such as speech, gaze, and gesture recognition, together with the rapid adoption of connected devices such as smart speakers and wearables, has ushered in the age of multi-modal interactions. These interactions allow us to create beautifully complex transitions between touchpoints, devices, and input modalities. But tackling such interfaces can be scary and overwhelming. In this talk, I share lessons I’ve learned from creating such experiences for medical professionals.

More Talks by Anna

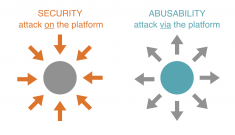

Abusability Testing Workshop

Topics: Ethics, Storytelling

Storytelling workshop aimed at unveiling pitfalls of otherwise exciting technologies. ...

Optimistic Approach to Notifications

Topics: Design Strategy, Methodology, Product Development

Hey user, allow push notifications? Well, I would, if you didn't treat me like a child. ...

Designing Speech-Driven Interfaces

Topics: Innovation, Methodology, Product Development, Voice UI

It seems like speech recognition is everywhere these days—in our phones, cars, and...

More talks

Bias, Uncovered

Discipline: User Experience

Topics: Communication, Diversity & Inclusion, Ethics, Leadership, Management & Strategy

Speaker: Crystal Yan

Are you ready to confront bias? In this talk, you’ll be challenged to uncover your...

‘Tidying Up’ Design

Discipline: Product Design

Topics: Design Thinking

Speaker: Bella Olszewski

Marie Kondo's 'Tidying Up' has taken Netflix and the internet by storm. This talk will...

Designing for Perspectives

Disciplines: Business, User Research

Topics: Culture, Data

Industry: Arts & Entertainment

Speaker: Tricia Wang

We in the West have long had the bad habit of confusing our individual perspective...
